AI in enterprise DFIR: Moving fast, staying defensible

Key insights

- More than two-thirds of enterprise DFIR teams are now using AI in their investigations. The conversation has moved on from whether to adopt it to whether you're using it responsibly.

- The same AI capabilities helping investigations move faster are also being used by adversaries to make attacks harder to detect.

- AI is most effective when it handles scale and pattern recognition. The judgment calls still belong to investigators.

- Teams that can clearly explain where AI was used, how outputs were validated, and how evidence was handled will be best positioned to defend their decisions.

AI adoption in enterprise digital forensics and incident response (DFIR) has reached a tipping point. Data from the 2026 State of Enterprise DFIR report, drawing on insights from more than 350 enterprise DFIR professionals, shows that 68% of them now use AI as part of their investigations, more than three times the share from just two years ago. AI has crossed from emerging capability to mainstream adoption among DFIR teams. But the more revealing number is the other 32%.

Why 32% of enterprise DFIR teams still haven’t adopted AI

These aren’t organizations unaware of AI and what it can do. The concerns cited by non-adopters are legitimate: questions around result validity, legal exposure, data security, and the handling of sensitive or personal information. These are exactly the questions a DFIR professional should be asking before introducing any new capability into investigations that may ultimately be scrutinized by regulators, courts, or boards.

The challenge is that waiting carries its own risk, and in 2026, that risk is growing.

AI is reshaping both attacks and investigations

One of the most significant findings in this year’s report is the dual role AI now plays in enterprise digital investigations.

In the hands of DFIR teams, AI has become a force multiplier, enabling investigators to process larger volumes of data, surface patterns more quickly, and scale their work in ways that were not previously possible. At the same time, adversaries are using those same capabilities to design attacks that are faster, more convincing, and harder to detect with traditional methods.

Respondents cited AI-powered attacks as the top factor making cyberattacks more challenging. Phishing and business email compromise, already the most frequently investigated incident types, are becoming harder to flag precisely because AI-generated content has narrowed the gap between legitimate and fabricated communications.

For enterprise DFIR leaders, the implication is direct: the risk calculation has changed. Teams that delay AI adoption are increasingly operating at a disadvantage relative to both the threats they face and the investigative workloads they are expected to handle.

In many cases, the risk of not using AI now outweighs the risk of using it responsibly.

Where AI fits and where it doesn’t

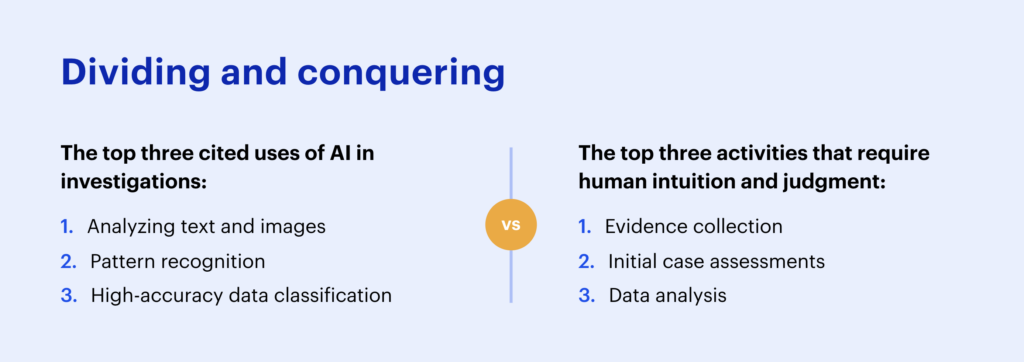

The report is also clear about where the line sits. Respondents indicated they most commonly use AI to analyze text and images, recognize patterns, and classify data with high accuracy.

Evidence collection, initial case assessments, and data analysis, meanwhile, are the tasks investigators still rate as requiring human intuition above all else. AI handles volume and pattern. Investigators make judgment calls.

That distinction becomes concrete when you see it in practice.

Using AI in an enterprise investigation

Consider a scenario that enterprise teams encounter regularly: an alert fires suggesting unusual network activity — connections from IP addresses associated with geographies where the organization has no employees or operations. The security team suspects a potential intrusion, but the volume of investigative data to review is significant. Traditionally, establishing what happened — and when — could take days of manual review before the team has enough insight to brief leadership or make a containment decision.

After remotely collecting data from the affected host using Magnet Axiom Cyber or Magnet Nexus and loading it into Magnet Review, investigators can turn to Intelligent Insights, powered by Magnet AI, to run a plain-language query across the collected evidence, surfacing potentially suspicious IPs and summarizing where and when they appear.

Intelligent Insights conducts multiple concurrent analyses across evidence types and returns a structured summary: flagged IPs, connection patterns, and suggested next lines of inquiry, all grounded in citations linked directly to the underlying artifacts.
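As a rough illustration of what the triage step in this scenario involves, the Python sketch below picks out connections from geographies outside an expected set, groups them by remote IP, and summarizes when each IP appears and which artifacts support it. It is not how Intelligent Insights works internally. The `ConnectionRecord` layout, the pre-enriched country codes (with `XX` as a placeholder), and the artifact IDs are hypothetical assumptions; the IP addresses come from reserved documentation ranges.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class ConnectionRecord:
    artifact_id: str      # hypothetical ID linking back to the source artifact
    timestamp: datetime
    remote_ip: str
    country: str          # assumed already enriched by a GeoIP lookup


@dataclass
class IpLead:
    ip: str
    country: str
    first_seen: datetime
    last_seen: datetime
    citations: list[str] = field(default_factory=list)


def flag_unexpected_ips(records, expected_countries):
    """Group connections from countries outside `expected_countries` by remote IP,
    recording first/last sighting and the artifacts that support each lead."""
    leads: dict[str, IpLead] = {}
    # Process in time order so the first sighting sets first_seen
    # and each later sighting moves last_seen forward.
    for rec in sorted(records, key=lambda r: r.timestamp):
        if rec.country in expected_countries:
            continue
        lead = leads.get(rec.remote_ip)
        if lead is None:
            leads[rec.remote_ip] = IpLead(
                rec.remote_ip, rec.country, rec.timestamp, rec.timestamp, [rec.artifact_id]
            )
        else:
            lead.last_seen = rec.timestamp
            lead.citations.append(rec.artifact_id)
    return list(leads.values())


# Hypothetical sample data; "XX" stands in for a country with no operations.
records = [
    ConnectionRecord("art-001", datetime(2026, 1, 5, 9, 0), "203.0.113.7", "XX"),
    ConnectionRecord("art-002", datetime(2026, 1, 5, 9, 5), "198.51.100.2", "US"),
    ConnectionRecord("art-003", datetime(2026, 1, 5, 11, 30), "203.0.113.7", "XX"),
]

for lead in flag_unexpected_ips(records, expected_countries={"US"}):
    print(lead.ip, lead.country, lead.first_seen, lead.last_seen, lead.citations)
```

Each lead carries its citations, so an investigator can open `art-001` and `art-003` and confirm the connections before treating the IP as significant.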

None of this replaces the investigator. Intelligent Insights generates leads — not conclusions. Every finding is validated against source artifacts before it informs investigative judgment or downstream actions, such as determining the scope of an incident, briefing the CISO, or supporting decisions made by incident response, legal, or risk teams. Every output is explainable and every citation verifiable.
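The validation step can be sketched the same way. The hypothetical check below treats a lead as verified only if it has citations and every citation resolves to a known artifact whose content actually contains the claimed indicator. The `Lead` type, the `evidence` mapping of artifact IDs to extracted text, and the plain substring match are assumptions for illustration, not a description of any Magnet product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lead:
    claim: str                    # indicator surfaced by the AI, e.g. an IP address
    citations: tuple[str, ...]    # artifact IDs cited as support


def validate_lead(lead, evidence):
    """Return (verified, problems). `evidence` maps artifact ID -> extracted text.
    A lead is verified only if it has citations and every one resolves to an
    artifact whose text contains the claimed indicator."""
    problems = []
    if not lead.citations:
        problems.append("no citations")
    for artifact_id in lead.citations:
        text = evidence.get(artifact_id)
        if text is None:
            problems.append(f"{artifact_id}: artifact not found")
        elif lead.claim not in text:
            problems.append(f"{artifact_id}: claim not present in artifact")
    return (not problems, problems)


# Hypothetical extracted artifact text.
evidence = {
    "art-001": "TCP 10.1.2.3:50211 -> 203.0.113.7:443 ESTABLISHED",
    "art-003": "DNS query resolved to 198.51.100.2",
}

lead = Lead("203.0.113.7", ("art-001", "art-003", "art-999"))
verified, problems = validate_lead(lead, evidence)
print(verified, problems)
```

A substring match is deliberately crude (for example, `10.0.0.1` would also match inside `10.0.0.12`); a real check would compare parsed artifact fields. The point is that a lead failing any citation stays a lead and goes back to the investigator.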

Why governance is the defining issue

That kind of speed is only sustainable if the governance structures around it are sound.

Digital investigations have already passed through two major eras. The first focused on file system forensics: a manual, painstaking process of digging through directories and sectors.

The second emphasized artifacts and human activity: messages, locations, application data, and browsing history.

We are now entering a third era, defined not just by AI surfacing artifacts, but by AI capable of identifying relationships, context, and investigative relevance across them.

For DFIR leaders, the question is no longer whether to embrace where the field is heading — it’s whether the governance structures exist to do so responsibly. That means being able to answer questions from legal teams, executives, regulators, or opposing counsel:

- Which tasks were AI-assisted?

- What models were used, and under what controls?

- How were outputs validated before informing investigative decisions?

- What data was processed — and was it handled in line with applicable privacy requirements?
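One way to keep those questions answerable is to log a structured record each time AI assists a task. The sketch below is a hypothetical schema, not a standard or a product feature: each field maps to one of the questions above, the example values (including the model name and controls) are invented, and `missing_answers()` flags any field left empty so gaps surface before a case is closed.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class AiUsageRecord:
    """Hypothetical audit entry; each field answers one governance question."""
    case_id: str
    task: str                    # Which task was AI-assisted?
    model: str                   # What model was used...
    controls: list[str]          # ...and under what controls?
    validation: str              # How were outputs validated?
    validated_by: str            # Who performed the validation?
    data_categories: list[str]   # What data was processed?
    privacy_review: str          # Was it handled per privacy requirements?

    def missing_answers(self):
        # Empty strings and empty lists count as unanswered.
        return [name for name, value in asdict(self).items() if not value]

    def to_json(self):
        # Serialize for an append-only audit log.
        return json.dumps(asdict(self), sort_keys=True)


record = AiUsageRecord(
    case_id="CASE-0001",
    task="Triage of network connections for suspicious IPs",
    model="example-model-v1",
    controls=["tenant-isolated deployment", "no training on case data"],
    validation="Each cited artifact reviewed in source view",
    validated_by="",
    data_categories=["network logs", "host artifacts"],
    privacy_review="Reviewed against internal data-handling policy",
)
print(record.missing_answers())
```

Here the empty `validated_by` field would be flagged, prompting the team to record who checked the output before the finding is relied on.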

The picture in this year’s data is clear. The teams that will be best positioned are those that can answer those questions confidently — because they adopted AI deliberately and responsibly, governed it carefully, and kept human judgment at the center of every decision.

Read the full 2026 State of Enterprise DFIR Report

Get the full picture in the 2026 State of Enterprise DFIR Report — covering AI, real-time collaboration, mobile evidence, and the expanding DFIR toolkit.